Python's map() and filter(): The generators you didn't know you needed.

WHY ARE WE STILL LOOPING MANUALLY?

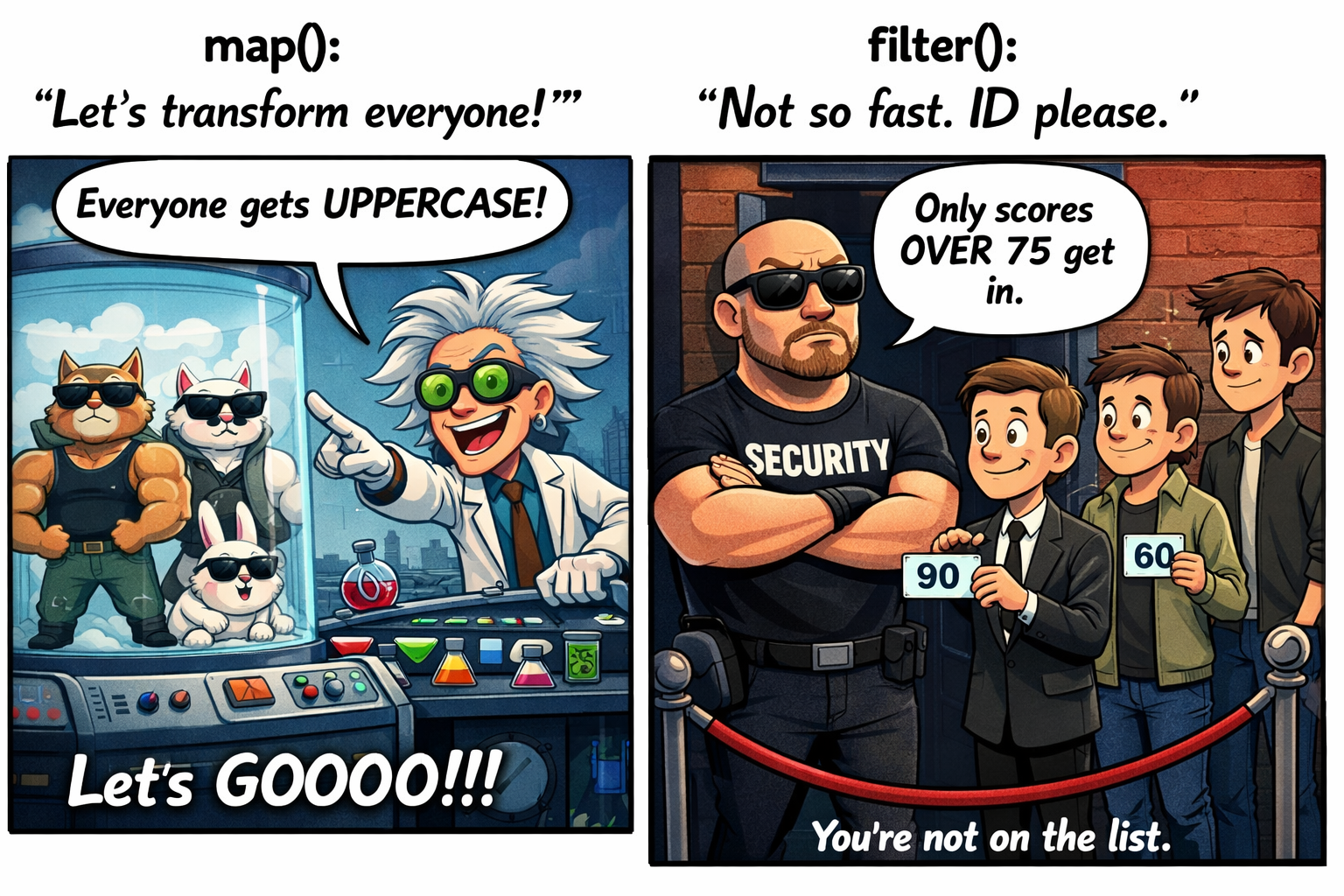

So, you’re still using for loops to transform or select every single element in your list? That’s so 2010. Let’s talk about map() and filter(), the high-order functions that are basically the ‘efficient friends’ who only do work when absolutely necessary.

PART 1: THE MAP()

The map() function is used to apply a function to each element of an iterable, producing a new transformed sequence.

The old-school way (I have too much time on my hands)

my_pets = ['alfred', 'tabitha', 'william', 'arla']

uppered_pets = []

for pet in my_pets:

pet_ = pet.upper()

uppered_pets.append(pet_)

print(uppered_pets)

# Output: ['ALFRED', 'TABITHA', 'WILLIAM', 'ARLA']

The functional way (To reuse the logic "")

def upper_pets(pet_list):

uppered_pets = []

for pet in pet_list:

pet_ = pet.upper()

uppered_pets.append(pet_)

return uppered_pets

my_pets = ['alfred', 'tabitha', 'william', 'arla']

result = upper_pets(my_pets)

print(result)

# Output: ['ALFRED', 'TABITHA', 'WILLIAM', 'ARLA']

The "fancy" pythonista way (List Comprehension)

my_pets = ['alfred', 'tabitha', 'william', 'arla']

uppered_pets = [pet.upper() for pet in my_pets]

print(upper_pets)

# Output: ['ALFRED', 'TABITHA', 'WILLIAM', 'ARLA']

The map() way (Work Smarter, not harder)

def upper_pet(pet):

return pet.upper()

my_pets = ['alfred', 'tabitha', 'william', 'arla']

uppered_pets = list(map(upper_pet, my_pets))

print(uppered_pets)

# Output: ['ALFRED', 'TABITHA', 'WILLIAM', 'ARLA']

The map() way (with a lambda — work smarter, not harder)

my_pets = ['alfred', 'tabitha', 'william', 'arla']

uppered_pets = list(map(lambda pet: pet.upper(), my_pets))

print(uppered_pets)

# Output: ['ALFRED', 'TABITHA', 'WILLIAM', 'ARLA']

The pythonista way to create a (Generator Comprehension)

my_pets = ['alfred', 'tabitha', 'william', 'arla']

uppered_pets = (pet.upper() for pet in my_pets)

uppered_pets = list(uppered_pets)

print(uppered_pets)

# Output: ['ALFRED', 'TABITHA', 'WILLIAM', 'ARLA']

⚡ Reminder: map() is a generator-like iterator. In Python 3, it returns a lazy iterator. It computes values only when needed and can be consumed only once!

PART 2: THE FILTER()

While map() transforms everyone, filter() is the picky bouncer at the club. It selects elements from an iterable based on a specific condition and only lets the ones that satisfy it through.

The old-school way (I have too much time on my hands)

scores = [66, 90, 68, 59, 76, 60, 88, 74, 81, 65]

over_75 = []

for score in scores:

if score > 75:

over_75.append(score)

print(over_75)

# Output: [90, 76, 88, 81]

The functional way (To reuse the logic "")

def filter_scores(score_list):

filtered_scores = []

for score in score_list:

if score > 75:

filtered_scores.append(score)

return filtered_scores

scores = [66, 90, 68, 59, 76, 60, 88, 74, 81, 65]

over_75 = filter_scores(scores)

print(over_75)

# Output: [90, 76, 88, 81]

The "fancy" pythonista way (List Comprehension)

scores = [66, 90, 68, 59, 76, 60, 88, 74, 81, 65]

over_75 = [score for score in scores if score > 75]

print(over_75)

# Output: [90, 76, 88, 81]

The filter() way (Work Smarter, not harder)

def is_over_75(score):

return score > 75

scores = [66, 90, 68, 59, 76, 60, 88, 74, 81, 65]

over_75 = list(filter(is_over_75, scores))

print(over_75)

# Output: [90, 76, 88, 81]

The filter() way (with a lambda — work smarter, not harder)

scores = [66, 90, 68, 59, 76, 60, 88, 74, 81, 65]

over_75 = list(filter(lambda score: score > 75, scores))

print(over_75)

# Output: [90, 76, 88, 81]

The pythonista way to create a (Generator Comprehension)

scores = [66, 90, 68, 59, 76, 60, 88, 74, 81, 65]

over_75 = (score for score in scores if score > 75)

over_75 = list(over_75)

print(over_75)

# Output: [90, 76, 88, 81]

⚡ Reminder: filter() is ALSO a generator-like iterator. Just like map(), calling filter(lambda x: x > 10, numbers) does not return a list. It returns a lazy filter object that evaluates items only when needed.

THE BIG REVEAL: WHY ARE THEY SO LAZY?

In Python 3, both map() and filter() return Iterators. This makes them incredibly similar to Generators:

- Memory Efficiency: They don't store the whole result in memory. Great for processing millions of items without melting your RAM.

- Lazy Evaluation: They only calculate the "next" item when you actually ask for it (e.g., when you loop over them or convert them to a list).

- One-Hit Wonders: Once you've iterated through a map or filter object, it's empty. You can't reuse it.

SUMMARY

- map() = Applies a function to every element.

- filter() = Keeps only the elements that meet a condition.

- Lazy Objects: Both return lazy iterators, not lists. Wrap them in

list()if you need the full result immediately. - Efficiency: They are the secret weapon for handling large datasets efficiently.

Stop being eager. Be lazy. Be a Python Pro.